With legacy ETRM systems approaching their next upgrade cycle, oil and gas companies should consider a postmodern approach to their next solution: one that is cloud-based, affordable and Agile-enabled.

Due to advanced pricing and capacity planning capabilities, the energy trading and risk management (ETRM) system continues to fill critical functional gaps in the recording, processing and settling of commodity transactions across the oil and gas industry. However, many of these solutions are showing their age. They’re built on older technologies, which haven’t adapted well to changing business conditions, nor have they facilitated accelerated deployment methodologies like Agile. As a result, new ETRM implementation costs continue to rise, as do ongoing support and maintenance costs.

Software companies for many non-ETRM solutions within oil and gas have turned to “postmodern” concepts like cloud-centric architectures and digital strategies to mitigate costs, but the technology architecture of most commercially available ETRMs isn’t conducive to leveraging these concepts. Understanding the advantages of these “postmodern” capabilities is critical to developing a strategic architectural approach, one that could provide a scalable, reliable, affordable and fit-for-purpose technology stack that enables more effective trading and risk management.

ETRM Technology Challenges: A Historical Perspective

ETRM systems have evolved since their beginnings as custom software solutions developed internally by trading houses. These early solutions focused first on providing the ability to record trades (initially just financial instruments, then expanded to physical trades), and then further expanded to provide a framework for capturing the full transactional life cycle through financial settlement. Over time, these bespoke systems evolved and were “productized” into many of the software offerings that have been mainstays of the ETRM software industry over the last two decades.

Software providers have faced a difficult challenge in expanding that initial functional footprint. While derivative trading concepts can be complex, the transaction recording is straightforward and has only become less complex as financial market regulations have increased over the past 20 years. Physical markets, however, have important nuances that vary by the combination of commodity and mode of transportation, and these nuances drive different requirements critical to successful trading within each market.

As most of the major ETRM systems were originally custom solutions, their technical architectures are based on technologies common at the time of their initial development (PowerBuilder or Delphi, anyone?). These solutions have gone through some re-platforming over time, but much of their initial architecture remains intact, with technology investment directed toward adjacent commodities rather than toward “postmodern” architectural advantages.

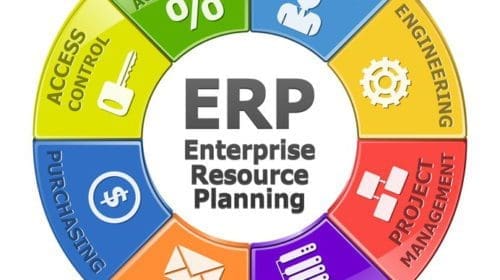

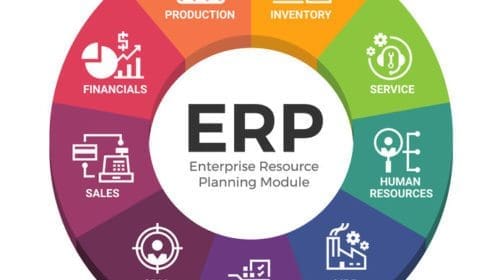

The resulting products provide a robust set of capabilities for particular commodities, but are poor fits for others (e.g., one might work well for exchange-traded derivatives, but poorly for crude cargoes). An additional burden of these legacy platforms is that their monolithic architectures are difficult and expensive to integrate with other systems, such as enterprise resource planning (ERP). Furthermore, ETRM transaction processing capabilities result in structured business datasets that are often “trapped” within the ETRM’s data model, unable to be easily consumed by the enterprise for advanced analytics or other solutions.

Recent consolidation among ETRM solution providers has reduced the number of vendors, but not the number of product options. Technology updates continue, but they’re often years behind schedule and even further behind market relevancy in a rapidly changing environment. Investment roadmaps for many ETRM products are unclear to current and prospective customers.

ETRM Technology Landscape Is Evolving

The challenges outlined above are not unique to the ETRM space. ERP software vendors, integrators and customers have grappled with similar sets of issues for several years. Gartner, for example, has explored this challenge in considerable depth in its research of the ERP market, and even coined the phrase “Postmodern ERP” to describe the architectural approach of leveraging multiple systems, and/or system components, to satisfy differing administrative, operational and control requirements.

In the ETRM sector, consolidation of the major vendors has reduced the number of players, but it hasn’t reduced the number of potential software products, nor led to increases in product investment. However, a new class of entrants has made meaningful strides in overcoming some of the historical constraints of ETRMs through postmodern technology architectures and modern implementation methodologies.

Key characteristics of a postmodern solution include the following:

- Cloud First – Going beyond simply hosting legacy on-premise solutions or offering software as a service (SaaS) versions to provide true cloud-based digital platforms.

- Modular – Products developed from the ground up to deliver independent capabilities and architected to encourage integration via exposed application programming interfaces (APIs).

- Specialized – User-centric designs engineered specifically to deliver an optimized user experience for certain functions unique to a market or commodity.

- Agile Enabled – Straightforward configuration options, combined with modularity and specialization, allow for rapid and iterative implementation.

Solutions with these capabilities promise the following:

- Lower Implementation & Operating Cost – Reduced total cost of ownership driven by shorter implementation cycles and the advantages of scaled cloud platforms.

- Accelerated Roadmaps – Development cycles by component lead to faster deployment and adoption of new functionalities and capabilities.

- Open/Accessible Datasets – Advanced technical architectures make data accessible and datasets extendable, allowing the enterprise to mine value out of its business data.
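To make the Modular and Open/Accessible Datasets characteristics above more concrete, here is a minimal sketch in Python. It is an illustration only, not any vendor's design: the `Trade` record, the `Component` contract, the `TradeCapture` and `Settlement` classes and the `process` helper are all hypothetical names, and the in-process `handle` method merely stands in for what would be an exposed API in a real cloud deployment.

```python
from dataclasses import dataclass, asdict
from typing import Protocol, Sequence


@dataclass(frozen=True)
class Trade:
    """Hypothetical, heavily simplified trade record."""
    trade_id: str
    commodity: str
    volume: float  # quantity in the commodity's native unit
    price: float   # price per unit


class Component(Protocol):
    """Contract each module exposes; a stand-in for a published API."""
    name: str

    def handle(self, trade: Trade) -> dict: ...


class TradeCapture:
    """Independent component that records trades and exposes them openly."""
    name = "trade_capture"

    def __init__(self) -> None:
        self.book = []

    def handle(self, trade: Trade) -> dict:
        self.book.append(trade)
        return {"module": self.name, "trade_id": trade.trade_id, "status": "recorded"}

    def export(self) -> list:
        # Plain dictionaries that any analytics tool could consume,
        # rather than data locked inside a proprietary data model.
        return [asdict(t) for t in self.book]


class Settlement:
    """Independent component that computes the amount due for a trade."""
    name = "settlement"

    def handle(self, trade: Trade) -> dict:
        amount = round(trade.volume * trade.price, 2)
        return {"module": self.name, "trade_id": trade.trade_id, "amount_due": amount}


def process(trade: Trade, components: Sequence[Component]) -> list:
    """Pass one trade through whichever components are deployed."""
    return [component.handle(trade) for component in components]


capture = TradeCapture()
results = process(Trade("T-1", "crude", 1000.0, 72.5), [capture, Settlement()])
```

Because each component depends only on the shared contract, one could in principle be replaced or upgraded without touching the others, which is the property the Accelerated Roadmaps point relies on.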

A New Way of Looking At The Problem – Postmodern Architecture

How can a company looking to leverage these characteristics deliver solutions required by the business and yet achieve the outcomes listed above?

One approach is to componentize the entire ETRM system into logical units by commodity or business line. Commodity Technical Advisory LLC suggests as much in its recent white paper, which advocates implementing commodity-specific ETRM solutions as a means to optimize commodity-specific business processes, enable greater user satisfaction and avoid the complexity of managing multiple commodities in a single, multi-commodity ETRM system. However, this doesn’t go far enough with today’s technology.

Taking this approach gets your enterprise closer by promising Cloud First and Specialized capabilities, but the reality is that you’ll be left with a solution that’s too broad and costly to upgrade consistently. That perpetuates a core issue with current solutions: they’re too shallow in many functional areas. Only through truly Modular and Agile-enabled solutions will you be able to accelerate deployment cycles enough to keep up with today’s rapidly changing business requirements.

The following graphics show the differences:

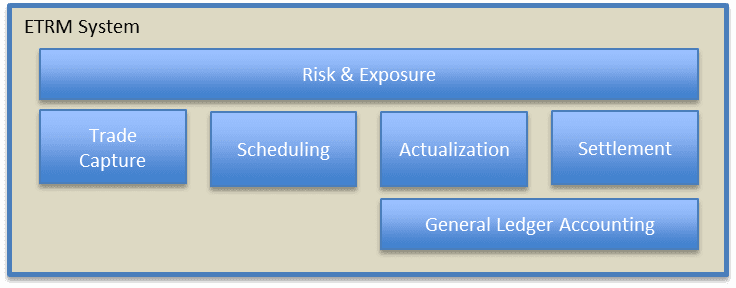

The Current Architecture of ETRM Systems:

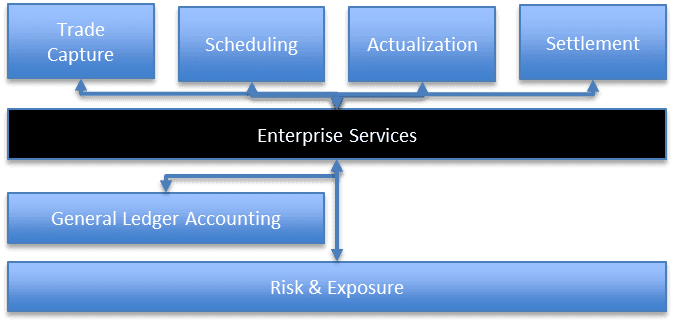

Postmodern ETRM Architecture:

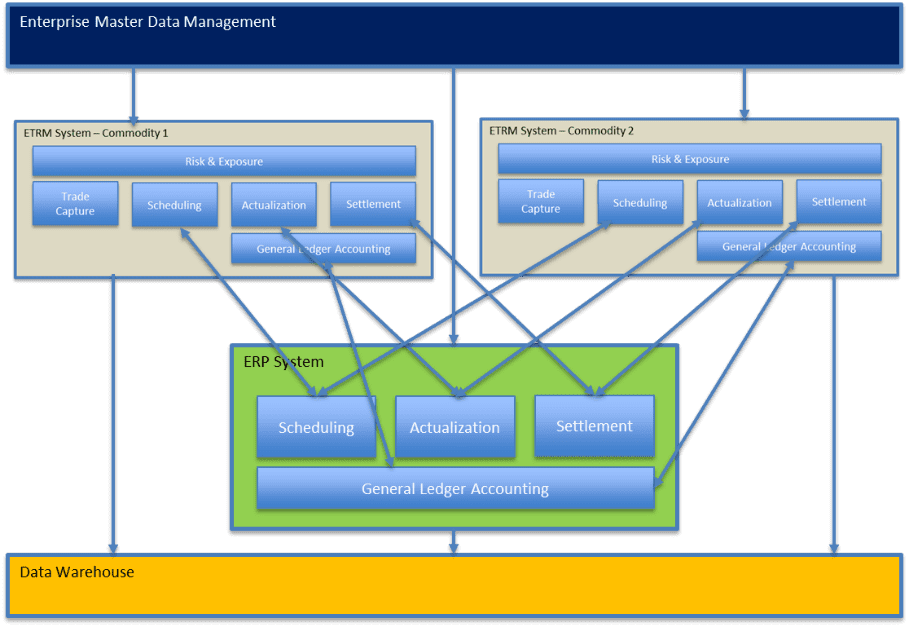

Looking at these two simple models might not make a reduction in complexity immediately apparent, but overlaying an ERP system interaction in a multi-commodity environment makes this clearer:

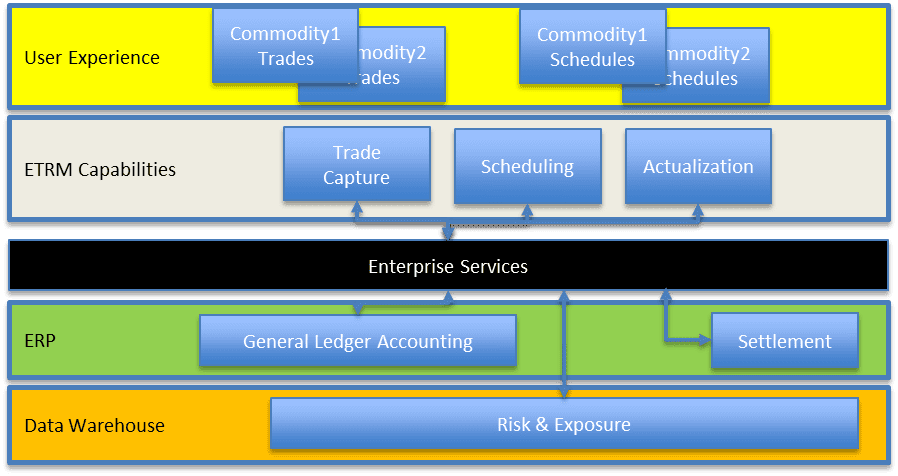

Now Consider a Postmodern Approach:

The Postmodern architecture reduces the complexity of your overall framework and provides a pathway to rapid innovation and adaptation to changing business requirements. Getting there requires rethinking your approach to ETRM technology and finding experienced implementation partners to help unlock the capabilities in these areas.

Conclusions & Recommendations

As we examined in “What You Need to Know Before Starting an ETRM Implementation Process,” it’s important to solidify the foundational business strategy and policy decisions underpinning the ETRM program of work before starting a technology project. The next order of business should be to develop a well-reasoned trading and risk solution architecture that:

- Utilizes a well-defined information architecture to build on harmonized master and reference data, providing for enhanced analytics, machine learning and AI.

- Leverages a component-based technical solution to streamline and accelerate system integration and enable cross-application process orchestration.

- Is optimized for the particular function(s) supported to accelerate user adoption and satisfaction while paving the way for automation and data integration (e.g., robotic process automation (RPA) and APIs).

With the commercial and risk considerations defined and a target architecture in place, the stage is set for a successful ETRM solution selection and implementation that can satisfy the business’s demand for flexibility, cost effectiveness and long-term value contribution.

Stephen Bell is a Managing Director in Opportune’s Process & Technology practice. Stephen is an industry veteran with over 20 years of experience working for many of the largest oil and gas companies in the world. His experience includes developing IT solutions for downstream, chemicals and upstream business sectors. During his career, he has led several successful efforts to extend and innovate on base SAP capabilities to meet the business requirements across the petrochemical supply chain, including trading, risk management, primary and secondary distribution, fuels marketing, retail, lubricants pricing, crude marketing and sales, and feedstocks and chemicals. Stephen holds a B.S. in Economics from the Wharton School of Business from the University of Pennsylvania.

Steven Bradford is a Managing Director with Opportune’s Process & Technology practice. He has over 23 years of leadership experience in business transformation, systems/technology implementation, business process and controls improvement. His primary focus has been on the application of technology to improve the end-to-end trading/commercial and risk management functions, improving operational efficiency and enabling better commercial/business decision-making through improved processes and data. He has delivered major programs and business transformation initiatives for several clients, including global integrated oil companies and independent U.S. refiners/marketers. Prior to joining Opportune, Steven was a Partner at Accenture within their U.S. Energy Practice and then served as a Principal at KPMG within the Commodity/Energy Risk Management Group. Steven has a B.S. degree in Industrial Engineering from Purdue University.

Kent Landrum, Managing Director in Opportune LLP’s Process & Technology practice who leads the firm’s Downstream Sector, has 20 years of diversified information technology experience with an emphasis on solution delivery for the energy industry. Kent has a proven track record of managing full life cycle software implementation projects for downstream and utilities companies, including ERP, ETRM, BI, MDM, and CRM. Prior to rejoining Opportune, he served as a Vice President & Chief Information Officer for CPS Energy. Kent holds a B.S. degree in Computer Science and Economics from Trinity University and a master’s degree in Organizational Development from the University of the Incarnate Word.
